Artificial Intelligence Nanodegree¶

Convolutional Neural Networks¶

Project: Write an Algorithm for a Dog Identification App¶

In this notebook, some template code has already been provided for you, and you will need to implement additional functionality to successfully complete this project. You will not need to modify the included code beyond what is requested. Sections that begin with '(IMPLEMENTATION)' in the header indicate that the following block of code will require additional functionality which you must provide. Instructions will be provided for each section, and the specifics of the implementation are marked in the code block with a 'TODO' statement. Please be sure to read the instructions carefully!

Note: Once you have completed all of the code implementations, you need to finalize your work by exporting the IPython Notebook as an HTML document. Before exporting the notebook to HTML, all of the code cells need to have been run so that reviewers can see the final implementation and output. You can then export the notebook by using the menu above and navigating to File -> Download as -> HTML (.html). Include the finished document along with this notebook as your submission.

In addition to implementing code, there will be questions that you must answer which relate to the project and your implementation. Each section where you will answer a question is preceded by a 'Question X' header. Carefully read each question and provide thorough answers in the following text boxes that begin with 'Answer:'. Your project submission will be evaluated based on your answers to each of the questions and the implementation you provide.

Note: Code and Markdown cells can be executed using the Shift + Enter keyboard shortcut. Markdown cells can be edited by double-clicking the cell to enter edit mode.

The rubric contains optional "Stand Out Suggestions" for enhancing the project beyond the minimum requirements. If you decide to pursue the "Stand Out Suggestions", you should include the code in this IPython notebook.

Why We're Here¶

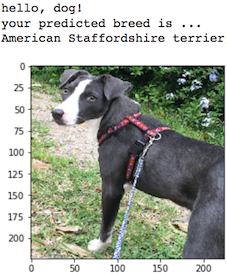

In this notebook, you will take the first steps towards developing an algorithm that could be used as part of a mobile or web app. At the end of this project, your code will accept any user-supplied image as input. If a dog is detected in the image, it will provide an estimate of the dog's breed. If a human is detected, it will provide an estimate of the dog breed that the person most resembles. The image above displays potential sample output of your finished project (... but we expect that each student's algorithm will behave differently!).

In this real-world setting, you will need to piece together a series of models to perform different tasks; for instance, the algorithm that detects humans in an image will be different from the CNN that infers dog breed. There are many points of possible failure, and no perfect algorithm exists. Your imperfect solution will nonetheless create a fun user experience!

The Road Ahead¶

We break the notebook into separate steps. Feel free to use the links below to navigate the notebook.

- Step 0: Import Datasets

- Step 1: Detect Humans

- Step 2: Detect Dogs

- Step 3: Create a CNN to Classify Dog Breeds (from Scratch)

- Step 4: Use a CNN to Classify Dog Breeds (using Transfer Learning)

- Step 5: Create a CNN to Classify Dog Breeds (using Transfer Learning)

- Step 6: Write your Algorithm

- Step 7: Test Your Algorithm

Step 0: Import Datasets¶

Import Dog Dataset¶

In the code cell below, we import a dataset of dog images. We populate a few variables through the use of the load_files function from the scikit-learn library:

- train_files, valid_files, test_files - numpy arrays containing file paths to images

- train_targets, valid_targets, test_targets - numpy arrays containing one-hot encoded classification labels

- dog_names - list of string-valued dog breed names for translating labels

from sklearn.datasets import load_files

from keras.utils import np_utils

import numpy as np

from glob import glob

# define function to load train, test, and validation datasets
def load_dataset(path):
    data = load_files(path)
    dog_files = np.array(data['filenames'])
    dog_targets = np_utils.to_categorical(np.array(data['target']), 133)
    return dog_files, dog_targets

# load train, test, and validation datasets

train_files, train_targets = load_dataset('dogImages/train')

valid_files, valid_targets = load_dataset('dogImages/valid')

test_files, test_targets = load_dataset('dogImages/test')

# load list of dog names

dog_names = [item[20:-1] for item in sorted(glob("dogImages/train/*/"))]

# print statistics about the dataset

print('There are %d total dog categories.' % len(dog_names))

print('There are %s total dog images.\n' % len(np.hstack([train_files, valid_files, test_files])))

print('There are %d training dog images.' % len(train_files))

print('There are %d validation dog images.' % len(valid_files))

print('There are %d test dog images.'% len(test_files))
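The one-hot encoded targets mentioned above can be illustrated with a small, library-free sketch. The helper names `one_hot` and `decode` are made up for this illustration and are not part of the notebook; the notebook itself relies on `np_utils.to_categorical` for this step.

```python
def one_hot(labels, num_classes):
    """Return one row per label with a single 1.0 at the label's index."""
    rows = []
    for label in labels:
        if not 0 <= label < num_classes:
            raise ValueError("label {} outside [0, {})".format(label, num_classes))
        row = [0.0] * num_classes
        row[label] = 1.0
        rows.append(row)
    return rows


def decode(row):
    """Recover the integer label from a one-hot row (index of the largest entry)."""
    return max(range(len(row)), key=row.__getitem__)


# three made-up labels out of four classes (the dog dataset uses 133 classes)
encoded = one_hot([2, 0, 1], num_classes=4)
print(encoded)
print([decode(row) for row in encoded])
```

Decoding with the index of the largest entry is the same idea that is applied later to the network's predicted probability vector.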

Import Human Dataset¶

In the code cell below, we import a dataset of human images, where the file paths are stored in the numpy array human_files.

import random

random.seed(8675309)

# load filenames in shuffled human dataset

human_files = np.array(glob("lfw/*/*"))

random.shuffle(human_files)

# print statistics about the dataset

print('There are %d total human images.' % len(human_files))

Step 1: Detect Humans¶

We use OpenCV's implementation of Haar feature-based cascade classifiers to detect human faces in images. OpenCV provides many pre-trained face detectors, stored as XML files on GitHub. We have downloaded one of these detectors and stored it in the haarcascades directory.

In the next code cell, we demonstrate how to use this detector to find human faces in a sample image.

import cv2

import matplotlib.pyplot as plt

%matplotlib inline

# extract pre-trained face detector

face_cascade = cv2.CascadeClassifier('haarcascades/haarcascade_frontalface_alt.xml')

# load color (BGR) image

img = cv2.imread(human_files[3])

# convert BGR image to grayscale

gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

# find faces in image

faces = face_cascade.detectMultiScale(gray)

# print number of faces detected in the image

print('Number of faces detected:', len(faces))

# get bounding box for each detected face
for (x,y,w,h) in faces:
    # add bounding box to color image
    cv2.rectangle(img,(x,y),(x+w,y+h),(255,0,0),2)

# convert BGR image to RGB for plotting

cv_rgb = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)

# display the image, along with bounding box

plt.imshow(cv_rgb)

plt.show()

Before using any of the face detectors, it is standard procedure to convert the images to grayscale. The detectMultiScale function executes the classifier stored in face_cascade and takes the grayscale image as a parameter.

In the above code, faces is a numpy array of detected faces, where each row corresponds to a detected face. Each detected face is a 1D array with four entries that specifies the bounding box of the detected face. The first two entries in the array (extracted in the above code as x and y) specify the horizontal and vertical positions of the top left corner of the bounding box. The last two entries in the array (extracted here as w and h) specify the width and height of the box.
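As a minimal sketch of the bounding-box layout described above, the following helpers convert an (x, y, w, h) row into corner coordinates and pick the largest box. The function names and the example boxes are hypothetical; they only mirror the row format that the text describes.

```python
def box_corners(box):
    """Convert (x, y, w, h) into ((left, top), (right, bottom))."""
    x, y, w, h = box
    return (x, y), (x + w, y + h)


def largest_box(boxes):
    """Return the box covering the largest area, or None if there are no boxes."""
    if len(boxes) == 0:
        return None
    return max(boxes, key=lambda b: b[2] * b[3])


# made-up detections, each row is (x, y, w, h)
example_faces = [(70, 60, 110, 110), (10, 20, 40, 50)]
print(box_corners(example_faces[0]))
print(largest_box(example_faces))
```

The two corner points are exactly what the rectangle-drawing call in the code above receives as its second and third arguments.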

Write a Human Face Detector¶

We can use this procedure to write a function that returns True if a human face is detected in an image and False otherwise. This function, aptly named face_detector, takes a string-valued file path to an image as input and appears in the code block below.

# returns "True" if face is detected in image stored at img_path
def face_detector(img_path):
    img = cv2.imread(img_path)
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    faces = face_cascade.detectMultiScale(gray)
    return len(faces) > 0

(IMPLEMENTATION) Assess the Human Face Detector¶

Question 1: Use the code cell below to test the performance of the face_detector function.

- What percentage of the first 100 images in human_files have a detected human face?

- What percentage of the first 100 images in dog_files have a detected human face?

Ideally, we would like 100% of human images with a detected face and 0% of dog images with a detected face. You will see that our algorithm falls short of this goal, but still gives acceptable performance. We extract the file paths for the first 100 images from each of the datasets and store them in the numpy arrays human_files_short and dog_files_short.

Answer:

On average, the frontal-face Haar cascade misses 2 of the 100 human test faces, mostly because the person is looking sideways: the second ear is not visible, and the shading patterns that Haar cascades rely on, such as the vertical highlight along the nose, are likely to be absent.

On the other hand, 11 of the 100 dog images satisfy the frontal-face Haar cascade, because they show relatively similar shading along the nose, below the eyes, and at the ears.

human_files_short = human_files[:100]

dog_files_short = train_files[:100]

# Do NOT modify the code above this line.

from PIL import Image

class cv_detector:
    """Base class for boolean image classification of a given image file using a customizable detector"""

    def __init__(self):
        """Constructor"""
        pass

    def detect(self, file_name):
        """Detection function
        file_name: The name of the file to be tested against the requirements of this detector
        result: (image, True if the given image fulfills the requirements)"""
        return None, False

class face_haar_cascade_detector(cv_detector):
    """Class which detects whether a given image shows a frontal human face"""

    def detect(self, file_name):
        # load color (BGR) image
        img = cv2.imread(file_name)
        # convert BGR image to grayscale
        gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
        # find faces in image
        result = face_cascade.detectMultiScale(gray)
        return img, len(result) > 0

def verify_haar_cascade(detector, assumed_result, file_name_list, max_log_count, max_size):
    """Verifies a Haar cascade using a list of image file names
    detector: The object detector to be used
    assumed_result: The result assumed (True = We assume the cascade detects at least one object)
    file_name_list: The list of file names
    max_log_count: The count of failed images to log
    max_size: The maximum thumbnail size of a failed image to be logged
    return: (Count of images with at least one detection, List of images where the result mismatched the assumption)"""
    detection_count = 0
    fail_images = []
    for cur_file_name in file_name_list: # for all images, load image and run the detector
        image, result = detector.detect(cur_file_name)
        detected = bool(result)
        if detected: # successfully detected? count it
            detection_count += 1
        # not the assumed result? remember some fails to be able to log them later
        if detected != assumed_result and len(fail_images) < max_log_count:
            image = Image.fromarray(cv2.cvtColor(image, cv2.COLOR_BGR2RGB))
            max_img_dim = max(image.size)
            if max_img_dim > max_size:
                scaling = float(max_size)/max_img_dim
                # Image.LANCZOS is the same filter that older Pillow versions also exposed as Image.ANTIALIAS
                image = image.resize((int(scaling*image.size[0]), int(scaling*image.size[1])), Image.LANCZOS)
            fail_images.append(image)
    return detection_count, fail_images

def print_results(img_type_name, detected, count, fail_images, fail_count):
    """Logs the results of a verify_haar_cascade execution
    img_type_name: A text which describes the logged comparison test
    detected: Count of detected objects
    count: Total image count
    fail_images: List of images where the detection failed
    fail_count: Total count of fails"""
    print("Detection rate of {:}: {:0.2f}% ({}/{})".format(img_type_name, float(detected)/count*100, detected, count))
    print("{} fails".format(fail_count))
    for epic_fail in fail_images:
        display(epic_fail)

face_detector = face_haar_cascade_detector()

max_log_human_fails = 2 # maximum count of images of failed human detections to be logged

max_log_dog_fails = 2 # maximum count of images incorrectly detected as human face

max_log_img_size = 128 # Maximum size of a failed image's thumbnail in the log

humans_detected, human_fails = verify_haar_cascade(face_detector, True, human_files_short,
                                                   max_log_human_fails, max_log_img_size)
dogs_detected, dog_fails = verify_haar_cascade(face_detector, False, dog_files_short,
                                               max_log_dog_fails, max_log_img_size)
print_results("human faces in human face images using front face Haar cascade", humans_detected,
              len(human_files_short), human_fails, len(human_files_short)-humans_detected)
print_results("human faces in dog images using front face Haar cascade", dogs_detected,
              len(dog_files_short), dog_fails, dogs_detected)
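The percentages asked for in Question 1 boil down to counting hits over a list of paths. A minimal sketch, assuming any callable that maps a file path to True or False; `detection_rate`, `fake_detector` and the file names are invented for illustration and do not touch OpenCV:

```python
def detection_rate(detector, paths):
    """Percentage of paths for which detector(path) is truthy."""
    if len(paths) == 0:
        return 0.0
    hits = sum(1 for path in paths if detector(path))
    return 100.0 * hits / len(paths)


# stand-in detector for illustration only: it "detects" a face when the
# (made-up) file name contains the word "face"
def fake_detector(path):
    return "face" in path


example_paths = ["face_01.jpg", "face_02.jpg", "dog_01.jpg", "face_03.jpg"]
print("{:.1f}%".format(detection_rate(fake_detector, example_paths)))
```

The class-based code above does the same counting, but additionally keeps thumbnails of the mismatched images for logging.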

Question 2: This algorithmic choice necessitates that we communicate to the user that we accept human images only when they provide a clear view of a face (otherwise, we risk having unnecessarily frustrated users!). In your opinion, is this a reasonable expectation to pose on the user? If not, can you think of a way to detect humans in images that does not necessitate an image with a clearly presented face?

Answer:

I think that today it still is, because many applications, such as eye-based smartphone unlocking, have accustomed people to looking straight into the camera. There are alternatives, though, such as detecting eyes, ears, and noses with additional cascades, or using deep learning, which can be far more tolerant than the Viola-Jones detector.

We suggest the face detector from OpenCV as a potential way to detect human images in your algorithm, but you are free to explore other approaches, especially approaches that make use of deep learning :). Please use the code cell below to design and test your own face detection algorithm. If you decide to pursue this optional task, report performance on each of the datasets.

Optional part - A human or a dog?¶

Because I noticed that the algorithm often fails for bearded people, and because a lot of human and dog pictures were provided with this project anyway, I decided to use this optional part for a convolutional network which learns the differences between humans and dogs instead of searching for humans or dogs independently.

The test accuracy was nearly 100%. However, because the network only knows two classes, it cannot distinguish between a human and, say, a car. In a second version I would add a third category, "something else".

import keras

from PIL import Image

import numpy as np

from tqdm import tqdm

from keras.preprocessing import image

def dvh_path_to_tensor(img_path, tar_size=32):
    """Imports a single image and converts it to a tensor of shape (1,size,size,3)
    img_path: The path to the image file
    tar_size: The size to which the image shall be resized to match the network's requirements
    return: The tensor"""
    # loads RGB image as PIL.Image.Image type
    img = image.load_img(img_path, target_size=(tar_size, tar_size))
    x = image.img_to_array(img)
    return np.expand_dims(x, axis=0)

def dvh_paths_to_tensor(img_paths, tar_size=32):
    """Imports a list of images and converts it to a tensor of shape (ImageCount,size,size,3)
    img_paths: The list of image files
    tar_size: The size to which the images shall be resized to match the network's requirements
    return: The tensor"""
    list_of_tensors = [dvh_path_to_tensor(img_path, tar_size=tar_size) for img_path in tqdm(img_paths)]
    return np.vstack(list_of_tensors).astype('float32')/255

Loading and splitting of the training, validation and testing data¶

dvh_img_size = 128 # the input image size for the model

x_train = [] # our image

y_train = [] # our labels

x_test = [] # testing images

y_test = [] # testing labels

image_list = []

total_images = 3700 # Total count of images to train on (humans + dogs)

label_is_human = 1
label_is_dog = 0
# load the given count of images, alternating between humans and dogs and setting the
# binary label to either human (label==1) or dog (label==0)
for i in range(total_images):
    index = int(i/2)
    is_human = i%2==0
    fn = human_files[index] if is_human else train_files[index]
    y_train.append(label_is_human if is_human else label_is_dog)
    image_list.append(fn)

x_train = dvh_paths_to_tensor(image_list, tar_size = dvh_img_size)

y_train = np.array(y_train)

# Split training and testing data

train_split = int(len(x_train)*9/10)

x_train, x_test = np.split(x_train, [train_split])

y_train, y_test = np.split(y_train, [train_split])

print("{} training images".format(len(x_train)))

print("{} testing images".format(len(x_test)))

from keras.utils import np_utils

import numpy as np

import matplotlib.pyplot as plt

from scipy.misc import toimage

import PIL

%matplotlib inline

# one-hot encode the labels

dvh_num_classes = len(np.unique(y_train))

y_train = keras.utils.to_categorical(y_train, dvh_num_classes)

y_test = keras.utils.to_categorical(y_test, dvh_num_classes)

print(str(dvh_num_classes)+ " total classes")

train_split = total_images // 10

# split training and validation data

(x_train, x_valid) = x_train[train_split:], x_train[:train_split]

(y_train, y_valid) = y_train[train_split:], y_train[:train_split]

print(x_train.shape[0], 'train samples')

print(x_test.shape[0], 'test samples')

print(x_valid.shape[0], 'validation samples')

Dog Vs Human - Training image examples¶

fig = plt.figure(figsize=(40,10))
for i in range(36):
    ax = fig.add_subplot(3, 12, i + 1, xticks=[], yticks=[])
    cur_org = x_train[i]
    ci = cur_org.squeeze()
    ax.imshow(ci)

Dog Vs Human - Validation image examples¶

fig = plt.figure(figsize=(40,10))
for i in range(36):
    ax = fig.add_subplot(3, 12, i + 1, xticks=[], yticks=[])
    cur_org = x_valid[i]
    ci = cur_org.squeeze()
    ax.imshow(ci)

Dog Vs Human - Model definition¶

I decided to reuse the successful, batch normalization based approach which was the result of Question 4.

It consists of three to five convolution layers, depending on the size of the input image. The input data and the result of each max pooling are batch normalized. The output of the final convolutional block is global average pooled and then classified into two classes, dog (index 0) and human (index 1).
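Why the number of convolution blocks depends on the input size can be seen from a little size arithmetic. The sketch below is a rough illustration, assuming 'valid' padding and stride 1 for each 3x3 convolution and non-overlapping 2x2 max pooling (the Keras defaults); `spatial_sizes` is a hypothetical helper, not part of the notebook.

```python
def spatial_sizes(input_size, blocks, kernel_size=3, pool_size=2):
    """Feature-map edge length after each conv + max-pool block.

    Assumes 'valid' padding and stride 1 for the convolution and
    non-overlapping max pooling.
    """
    sizes = []
    size = input_size
    for _ in range(blocks):
        size = size - kernel_size + 1   # valid convolution shrinks the map
        size = size // pool_size        # max pooling rounds down
        if size < 1:
            raise ValueError("input too small for {} blocks".format(blocks))
        sizes.append(size)
    return sizes


print(spatial_sizes(128, 5))   # five blocks fit a 128x128 input
print(spatial_sizes(32, 3))    # three blocks fit a 32x32 input
```

Under these assumptions a 32x32 input is already down to a 2x2 feature map after three blocks, so a fourth 3x3 convolution would have nothing left to slide over; larger inputs leave room for the additional blocks.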

from keras.layers import Conv2D, MaxPooling2D, GlobalAveragePooling2D

from keras.layers import Dropout, Flatten, Dense

from keras.models import Sequential

from keras.layers.normalization import BatchNormalization

from keras.callbacks import ModelCheckpoint

dvh_model = Sequential()

# input is a tensor of shape size, size, 3

dvh_model.add(BatchNormalization(input_shape=(dvh_img_size, dvh_img_size, 3)))

# 3 to 5 identical blocks of 3x3 convolution, max pooling and batch normalization

dvh_model.add(Conv2D(filters=16, kernel_size=3, activation='relu'))

dvh_model.add(MaxPooling2D(pool_size=2))

dvh_model.add(BatchNormalization())

dvh_model.add(Conv2D(filters=32, kernel_size=3, activation='relu'))

dvh_model.add(MaxPooling2D(pool_size=2))

dvh_model.add(BatchNormalization())

dvh_model.add(Conv2D(filters=64, kernel_size=3, activation='relu'))

dvh_model.add(MaxPooling2D(pool_size=2))

dvh_model.add(BatchNormalization())

if dvh_img_size>=64:
    dvh_model.add(Conv2D(filters=128, kernel_size=3, activation='relu'))
    dvh_model.add(MaxPooling2D(pool_size=2))
    dvh_model.add(BatchNormalization())

if dvh_img_size>=128:
    dvh_model.add(Conv2D(filters=256, kernel_size=3, activation='relu'))
    dvh_model.add(MaxPooling2D(pool_size=2))
    dvh_model.add(BatchNormalization())

dvh_model.add(GlobalAveragePooling2D())

# Result is binary, so we reduce it to 2 outputs, (1 0) = dog, (0 1) = human

dvh_model.add(Dense(2, activation='softmax'))

dvh_model.summary()

dvh_model.compile(optimizer='rmsprop', loss='binary_crossentropy', metrics=['accuracy'])

# train the model

checkpointer = ModelCheckpoint(filepath='saved_models/model.weights.dogs_vs_humans.hdf5', verbose=1,
                               save_best_only=True)
dvh_model.fit(x_train, y_train, batch_size=32, epochs=5,
              validation_data=(x_valid, y_valid), callbacks=[checkpointer],
              verbose=2, shuffle=True)

dvh_model.load_weights('saved_models/model.weights.dogs_vs_humans.hdf5')

# get index of the predicted class (dog or human) for each image in test set

custom_predictions = [np.argmax(dvh_model.predict(np.expand_dims(feature, axis=0))) for feature in x_test]

# report test accuracy

test_accuracy = 100*np.sum(np.array(custom_predictions)==np.argmax(y_test, axis=1))/len(custom_predictions)

print('Test accuracy: %.4f%%' % test_accuracy)

Human or Dog - Final showdown¶

test_images = []

# select 50 human and 50 dog images and shuffle them afterwards
for index in range(50):
    test_images.append(human_files_short[index])
for index in range(50):
    test_images.append(dog_files_short[index])
random.shuffle(test_images)

# load and convert them to tensors

test_image_tensor = dvh_paths_to_tensor(test_images, tar_size = dvh_img_size)

fig = plt.figure(figsize=(20,40))

example_count = 24

# predict each image's type and print its image and prediction to the grid
for example_index in range(example_count):
    example_image = test_image_tensor[example_index]
    expanded_image = np.expand_dims(example_image, axis=0)
    predict = dvh_model.predict(expanded_image)
    type_index = np.argmax(predict)
    likeliness = predict[0][type_index]
    result = "{} ({:0.2f}%)".format("Human" if type_index==1 else "Dog", float(likeliness*100))
    ax = fig.add_subplot(example_count, 4, example_index+1, title=result)
    ax.imshow(example_image.squeeze())

Step 2: Detect Dogs¶

In this section, we use a pre-trained ResNet-50 model to detect dogs in images. Our first line of code downloads the ResNet-50 model, along with weights that have been trained on ImageNet, a very large, very popular dataset used for image classification and other vision tasks. ImageNet contains over 10 million URLs, each linking to an image containing an object from one of 1000 categories. Given an image, this pre-trained ResNet-50 model returns a prediction (derived from the available categories in ImageNet) for the object that is contained in the image.

from keras.applications.resnet50 import ResNet50

# define ResNet50 model

ResNet50_model = ResNet50(weights='imagenet')

Pre-process the Data¶

When using TensorFlow as backend, Keras CNNs require a 4D array (which we'll also refer to as a 4D tensor) as input, with shape

$$ (\text{nb_samples}, \text{rows}, \text{columns}, \text{channels}), $$

where nb_samples corresponds to the total number of images (or samples), and rows, columns, and channels correspond to the number of rows, columns, and channels for each image, respectively.

The path_to_tensor function below takes a string-valued file path to a color image as input and returns a 4D tensor suitable for supplying to a Keras CNN. The function first loads the image and resizes it to a square image that is $224 \times 224$ pixels. Next, the image is converted to an array, which is then resized to a 4D tensor. In this case, since we are working with color images, each image has three channels. Likewise, since we are processing a single image (or sample), the returned tensor will always have shape

$$ (1, 224, 224, 3). $$

The paths_to_tensor function takes a numpy array of string-valued image paths as input and returns a 4D tensor with shape

$$ (\text{nb_samples}, 224, 224, 3). $$

Here, nb_samples is the number of samples, or number of images, in the supplied array of image paths. It is best to think of nb_samples as the number of 3D tensors (where each 3D tensor corresponds to a different image) in your dataset!

from keras.preprocessing import image

from tqdm import tqdm

def path_to_tensor(img_path):
    # loads RGB image as PIL.Image.Image type
    img = image.load_img(img_path, target_size=(224, 224))
    # convert PIL.Image.Image type to 3D tensor with shape (224, 224, 3)
    x = image.img_to_array(img)
    # convert 3D tensor to 4D tensor with shape (1, 224, 224, 3) and return 4D tensor
    return np.expand_dims(x, axis=0)

def paths_to_tensor(img_paths):
    list_of_tensors = [path_to_tensor(img_path) for img_path in tqdm(img_paths)]
    return np.vstack(list_of_tensors)
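The shape bookkeeping described above can be checked without loading any image files. The following sketch uses all-zero arrays as stand-ins for loaded images; `fake_image_tensor` is a hypothetical helper that only mimics the shape that a single loaded image would have.

```python
import numpy as np


def fake_image_tensor(rows=224, columns=224, channels=3):
    """Stand-in for a loaded image: a 4D tensor holding a single sample."""
    image_3d = np.zeros((rows, columns, channels), dtype=np.float32)
    # add the leading sample axis: (rows, columns, channels) -> (1, rows, columns, channels)
    return np.expand_dims(image_3d, axis=0)


single = fake_image_tensor()
# stacking five single-sample tensors along the first axis gives nb_samples = 5
batch = np.vstack([fake_image_tensor() for _ in range(5)])
print(single.shape)
print(batch.shape)
```

Stacking works along the first axis, which is why each image needs the leading sample axis before it can be combined into a batch.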

Making Predictions with ResNet-50¶

Getting the 4D tensor ready for ResNet-50, and for any other pre-trained model in Keras, requires some additional processing. First, the RGB image is converted to BGR by reordering the channels. ResNet-50 then requires the additional normalization step that the mean pixel (expressed in BGR order as $[103.939, 116.779, 123.68]$ and calculated from all pixels in all images in ImageNet) must be subtracted from every pixel in each image. This is implemented in the imported function preprocess_input. If you're curious, you can check the code for preprocess_input here.

Now that we have a way to format our image for supplying to ResNet-50, we are now ready to use the model to extract the predictions. This is accomplished with the predict method, which returns an array whose $i$-th entry is the model's predicted probability that the image belongs to the $i$-th ImageNet category. This is implemented in the ResNet50_predict_labels function below.

By taking the argmax of the predicted probability vector, we obtain an integer corresponding to the model's predicted object class, which we can identify with an object category through the use of this dictionary.

from keras.applications.resnet50 import preprocess_input, decode_predictions

def ResNet50_predict_labels(img_path):
    # returns the index of the predicted ImageNet category for the image located at img_path
    img = preprocess_input(path_to_tensor(img_path))
    return np.argmax(ResNet50_model.predict(img))
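The channel reordering and mean subtraction described in this section can be sketched on plain Python tuples. This is an illustration only, not the Keras implementation: the mean values are the ones quoted in the text, and the sketch assumes they apply in BGR order. The helper names are made up.

```python
# ImageNet channel means; assumed here to be listed in BGR order
IMAGENET_MEAN_BGR = (103.939, 116.779, 123.68)


def preprocess_pixel(rgb_pixel):
    """Reorder an (R, G, B) pixel to (B, G, R) and subtract the channel means."""
    r, g, b = rgb_pixel
    bgr = (b, g, r)
    return tuple(value - mean for value, mean in zip(bgr, IMAGENET_MEAN_BGR))


def preprocess_image(rgb_rows):
    """Apply preprocess_pixel to every pixel of a nested-list image."""
    return [[preprocess_pixel(pixel) for pixel in row] for row in rgb_rows]


tiny_image = [[(255, 0, 0), (0, 0, 0)]]   # one red and one black pixel
print(preprocess_image(tiny_image))
```

Note that the pixel values stay on the 0-255 scale in this step; only the channel order and the zero point change.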

Write a Dog Detector¶

While looking at the dictionary, you will notice that the categories corresponding to dogs appear in an uninterrupted sequence and correspond to dictionary keys 151-268, inclusive, to include all categories from 'Chihuahua' to 'Mexican hairless'. Thus, in order to check to see if an image is predicted to contain a dog by the pre-trained ResNet-50 model, we need only check if the ResNet50_predict_labels function above returns a value between 151 and 268 (inclusive).

We use these ideas to complete the dog_detector function below, which returns True if a dog is detected in an image (and False if not).

### returns "True" if a dog is detected in the image stored at img_path
def dog_detector(img_path):
    prediction = ResNet50_predict_labels(img_path)
    return ((prediction <= 268) & (prediction >= 151))
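The decision rule above, argmax followed by a range check, can be tried out on made-up probability vectors without running the network. The names `argmax` and `is_dog` and the example vectors are hypothetical; only the index range 151-268 comes from the text.

```python
DOG_FIRST, DOG_LAST = 151, 268   # ImageNet dog categories, inclusive


def argmax(values):
    """Index of the largest entry in a sequence."""
    return max(range(len(values)), key=values.__getitem__)


def is_dog(probabilities):
    """True if the most probable ImageNet category lies in the dog range."""
    return DOG_FIRST <= argmax(probabilities) <= DOG_LAST


# made-up 1000-entry probability vectors, one peaking inside the dog range
dog_like = [0.0] * 1000
dog_like[207] = 0.9
other = [0.0] * 1000
other[281] = 0.8

print(is_dog(dog_like))
print(is_dog(other))
```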

(IMPLEMENTATION) Assess the Dog Detector¶

Question 3: Use the code cell below to test the performance of your dog_detector function.

- What percentage of the images in human_files_short have a detected dog?

- What percentage of the images in dog_files_short have a detected dog?

Answer:

Dogs were detected as dogs in 100% of the images. Humans were occasionally (in about 1% of the images) misdetected as dogs.

### TODO: Test the performance of the dog_detector function

### on the images in human_files_short and dog_files_short.

class dog_detector_class(cv_detector):
    """Class which detects whether ResNet-50 predicts a dog for a given image"""

    def detect(self, file_name):
        img = cv2.imread(file_name)
        result = dog_detector(file_name)
        return img, result

dog_detect = dog_detector_class()

humans_detected, human_fails = verify_haar_cascade(dog_detect, False, human_files_short,
                                                   max_log_human_fails, max_log_img_size)
dogs_detected, dog_fails = verify_haar_cascade(dog_detect, True, dog_files_short,
                                               max_log_dog_fails, max_log_img_size)
print_results("dogs in human face images using ResNet 50 labels for dogs", humans_detected,
              len(human_files_short), human_fails, humans_detected)
print_results("dogs in dog images using ResNet 50 labels for dogs", dogs_detected,
              len(dog_files_short), dog_fails, len(dog_files_short)-dogs_detected)

Step 3: Create a CNN to Classify Dog Breeds (from Scratch)¶

Now that we have functions for detecting humans and dogs in images, we need a way to predict breed from images. In this step, you will create a CNN that classifies dog breeds. You must create your CNN from scratch (so, you can't use transfer learning yet!), and you must attain a test accuracy of at least 1%. In Step 5 of this notebook, you will have the opportunity to use transfer learning to create a CNN that attains greatly improved accuracy.

Be careful with adding too many trainable layers! More parameters means longer training, which means you are more likely to need a GPU to accelerate the training process. Thankfully, Keras provides a handy estimate of the time that each epoch is likely to take; you can extrapolate this estimate to figure out how long it will take for your algorithm to train.

We mention that the task of assigning breed to dogs from images is considered exceptionally challenging. To see why, consider that even a human would have great difficulty in distinguishing between a Brittany and a Welsh Springer Spaniel.

| Brittany | Welsh Springer Spaniel |
|---|---|
| (image not shown) | (image not shown) |

It is not difficult to find other dog breed pairs with minimal inter-class variation (for instance, Curly-Coated Retrievers and American Water Spaniels).

| Curly-Coated Retriever | American Water Spaniel |
|---|---|
| (image not shown) | (image not shown) |

Likewise, recall that labradors come in yellow, chocolate, and black. Your vision-based algorithm will have to conquer this high intra-class variation to determine how to classify all of these different shades as the same breed.

| Yellow Labrador | Chocolate Labrador | Black Labrador |
|---|---|---|
| (image not shown) | (image not shown) | (image not shown) |

We also mention that random chance presents an exceptionally low bar: setting aside the fact that the classes are slightly imbalanced, a random guess will provide a correct answer roughly 1 in 133 times, which corresponds to an accuracy of less than 1%.
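The chance-level figure can be verified with one line of arithmetic. The sketch assumes perfectly balanced classes, which the text notes is only approximately true.

```python
num_breeds = 133
# accuracy of a uniform random guess, in percent, assuming balanced classes
chance_accuracy = 100.0 / num_breeds
print("{:.2f}%".format(chance_accuracy))
```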

Remember that the practice is far ahead of the theory in deep learning. Experiment with many different architectures, and trust your intuition. And, of course, have fun!

Pre-process the Data¶

We rescale the images by dividing every pixel in every image by 255.

from PIL import ImageFile

ImageFile.LOAD_TRUNCATED_IMAGES = True

# pre-process the data for Keras

train_tensors = paths_to_tensor(train_files).astype('float32')/255

valid_tensors = paths_to_tensor(valid_files).astype('float32')/255

test_tensors = paths_to_tensor(test_files).astype('float32')/255

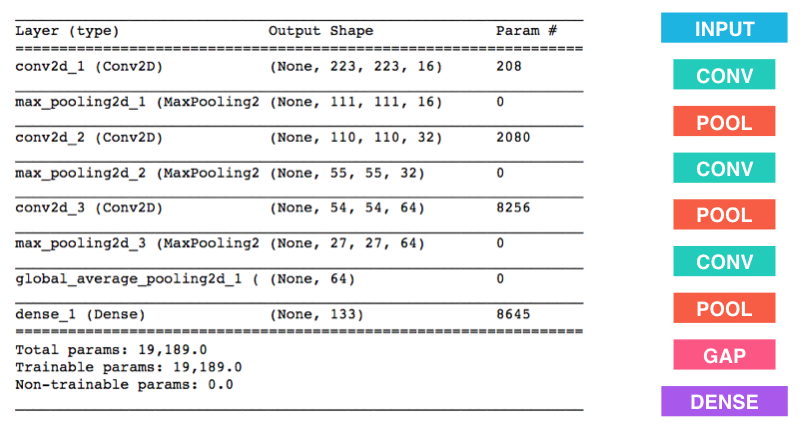

(IMPLEMENTATION) Model Architecture¶

Create a CNN to classify dog breed. At the end of your code cell block, summarize the layers of your model by executing the line:

model.summary()

We have imported some Python modules to get you started, but feel free to import as many modules as you need. If you end up getting stuck, here's a hint that specifies a model that trains relatively fast on CPU and attains >1% test accuracy in 5 epochs:

Question 4: Outline the steps you took to get to your final CNN architecture and your reasoning at each step. If you chose to use the hinted architecture above, describe why you think that CNN architecture should work well for the image classification task.

Answer:

I invested a lot of time (nearly a week) for this step of the project, on the one hand to optimize the training process of the model above, but majorly to get an understanding and feeling about which modification has which positive or negative impact on the final result:

- The possible solution above with a 2x2 kernel led to 2.6% accuracy

- The possible solution above with a 3x3 kernel to 3.1%. In other tests I also experienced that 3x3 works fine in general as it's able to represent combination for layer 0 features such as line directions in different rotations very well as well as combining these to layer 1 and layer 2 features such as partial or whole circles.

- Then I added a second fully connected layer which increased the chance to 3.2%... so not really much. Afterwards I tried a fourth convolution layer instead which increased the chance to 3.5% --> also just a small step, but better :)

- After watching the video from Matthew Zeiler showing insights of a CNNs behavior I wasn't really surprised by this, so I added a 5th convolution layer, now 3x3 for 16, 32, 64, 128 and 256 features, a 2x2 pooling after each and finished by a GAP which let to slowly satisfying: 7%

- I experimented with two dozen similar configurations after that over a couple of days, with or without more fully connected layers, with Flatten instead of GAP etc. and reached about 10% at the end.

- I then learned from Andrew Ng's deeplearning.ai material about batch normalization of the inputs of each subsequent layer, which is said to speed up the training of CNNs considerably and, as a positive side effect, also has a slight regularizing effect and reduces covariate shift.

- Its implementation changed the result tremendously, from the previous 10% to now nearly 30%, just by adding the normalization layers.

- I tried to further improve the model/network but without success; depending on the luck of the weight initialization it was sometimes 29%, sometimes 31%, but never much more or less.

- I then (as proposed) also implemented image augmentation, which added another 8% to the accuracy, with a final result of 43% accuracy for this "from scratch", 5+1 layer final solution.

It was really a great experience :).
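
The batch normalization step mentioned in the answer can be sketched in a few lines. This is a minimal plain-Python illustration for a single feature over one mini-batch, not the Keras implementation: the function name `batch_norm`, the sample activations and the value of `eps` are assumptions made for illustration, and the learnable scale `gamma` and shift `beta` are simply left at 1 and 0.

```python
import math

def batch_norm(values, gamma=1.0, beta=0.0, eps=1e-3):
    """Normalize one feature across a mini-batch, then scale and shift it."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [gamma * (v - mean) / math.sqrt(var + eps) + beta for v in values]

# made-up activations of one feature for a mini-batch of four images
activations = [0.5, 2.0, 3.5, 6.0]
normalized = batch_norm(activations)
print(normalized)
```

After this step the feature has roughly zero mean and unit variance within the batch, which is the property that tends to make training less sensitive to the scale of the previous layer's outputs.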

from keras.layers import Conv2D, MaxPooling2D, GlobalAveragePooling2D

from keras.layers import Dropout, Flatten, Dense

from keras.models import Sequential

from keras.layers import BatchNormalization

augment = True

model = Sequential()

model.add(BatchNormalization(input_shape=(224, 224, 3)))

model.add(Conv2D(filters=16, kernel_size=3, activation='relu'))

model.add(MaxPooling2D(pool_size=2))

model.add(BatchNormalization())

model.add(Conv2D(filters=32, kernel_size=3, activation='relu'))

model.add(MaxPooling2D(pool_size=2))

model.add(BatchNormalization())

model.add(Conv2D(filters=64, kernel_size=3, activation='relu'))

model.add(MaxPooling2D(pool_size=2))

model.add(BatchNormalization())

model.add(Conv2D(filters=128, kernel_size=3, activation='relu'))

model.add(MaxPooling2D(pool_size=2))

model.add(BatchNormalization())

model.add(Conv2D(filters=256, kernel_size=3, activation='relu'))

model.add(MaxPooling2D(pool_size=2))

model.add(BatchNormalization())

model.add(GlobalAveragePooling2D())

model.add(Dense(133, activation='softmax'))

model.summary()
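
The spatial sizes reported by `model.summary()` can be checked by hand. The sketch below traces the height/width through the five convolution and pooling blocks, assuming the Keras defaults used above (unpadded 'valid' convolutions with stride 1, and pooling with a stride equal to the pool size); the helper name `trace_shapes` is made up for this illustration.

```python
def trace_shapes(size=224, filters=(16, 32, 64, 128, 256), kernel=3, pool=2):
    """Return (height/width, channels) after each conv + pool block."""
    shapes = []
    for f in filters:
        size = size - kernel + 1   # unpadded convolution with stride 1
        size = size // pool        # pooling discards an incomplete last window
        shapes.append((size, f))
    return shapes

shapes = trace_shapes()
for size, f in shapes:
    print("{0} x {0} x {1}".format(size, f))
```

Under these assumptions the last block yields a 5 x 5 x 256 feature map, which the global average pooling layer reduces to a vector of 256 values before the final dense layer.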

Compile the Model¶

model.compile(optimizer='rmsprop', loss='categorical_crossentropy', metrics=['accuracy'])

(IMPLEMENTATION) Train the Model¶

Train your model in the code cell below. Use model checkpointing to save the model that attains the best validation loss.

You are welcome to augment the training data, but this is not a requirement.

Augment images¶

from keras.preprocessing.image import ImageDataGenerator

if augment:
    # create and configure augmented image generator for the training data
    datagen_train = ImageDataGenerator(width_shift_range=0.1, height_shift_range=0.1, horizontal_flip=True)
    # fit augmented image generator on data
    # (only required for featurewise statistics such as featurewise_center; harmless here)
    datagen_train.fit(train_tensors)

    # create and configure augmented image generator for the validation data
    # (note: the validation images are augmented as well)
    datagen_valid = ImageDataGenerator(width_shift_range=0.1, height_shift_range=0.1, horizontal_flip=True)
    # fit augmented image generator on data
    datagen_valid.fit(valid_tensors)
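
To make the two kinds of augmentation configured above more tangible, here is a simplified plain-Python sketch of a horizontal flip and a horizontal shift on a tiny single-channel "image" (a list of rows). It is only an illustration of the idea, not what `ImageDataGenerator` does internally; the function names are hypothetical, and filling the vacated pixels with the nearest edge value is an assumption chosen for simplicity.

```python
def flip_horizontal(image):
    """Mirror every row of the image."""
    return [list(reversed(row)) for row in image]

def shift_horizontal(image, k):
    """Shift every row by k pixels (k > 0: right, k < 0: left),
    repeating the edge pixel to fill the gap."""
    shifted = []
    for row in image:
        n = len(row)
        if k >= 0:
            shifted.append([row[0]] * k + row[:n - k])
        else:
            shifted.append(row[-k:] + [row[-1]] * (-k))
    return shifted

image = [[1, 2, 3],
         [4, 5, 6]]
flipped = flip_horizontal(image)
moved_right = shift_horizontal(image, 1)
moved_left = shift_horizontal(image, -1)
print(flipped, moved_right, moved_left)
```

Because a flipped or slightly shifted dog is still the same breed, such transformations provide additional training variety without new labels.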

from keras.callbacks import ModelCheckpoint

### TODO: specify the number of epochs that you would like to use to train the model.

epochs = 20

batch_size = 20

### Do NOT modify the code below this line.

### Note: Sorry, but I had to implement the augmentation below that line
checkpointer = ModelCheckpoint(filepath='saved_models/weights.best.from_scratch.hdf5',
                               verbose=1, save_best_only=True)

if augment:
    print("Fitting model using image augmentation...")
    model.fit_generator(datagen_train.flow(train_tensors, train_targets, batch_size=batch_size),
                        steps_per_epoch=train_tensors.shape[0] // batch_size,
                        epochs=epochs, verbose=2, callbacks=[checkpointer],
                        validation_data=datagen_valid.flow(valid_tensors, valid_targets, batch_size=batch_size),
                        validation_steps=valid_tensors.shape[0] // batch_size)
else:
    model.fit(train_tensors, train_targets, validation_data=(valid_tensors, valid_targets),
              epochs=epochs, batch_size=batch_size, callbacks=[checkpointer], verbose=1)
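
The idea behind checkpointing on the best validation loss can be sketched without Keras. The helper below is a hypothetical illustration (not the `ModelCheckpoint` source): it walks through a list of made-up validation losses and records the epochs at which a "best only" checkpoint would overwrite the saved weights.

```python
def epochs_that_save(val_losses):
    """Return the 1-based epochs at which the validation loss improved."""
    best = float("inf")
    saved = []
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:      # strictly better than anything seen so far
            best = loss
            saved.append(epoch)
    return saved

val_losses = [4.8, 4.5, 4.6, 4.2, 4.3]   # made-up values for illustration
saved = epochs_that_save(val_losses)
print(saved)
```

With these values the weights on disk after training would be those of epoch 4, even though training continued to epoch 5, which is why the weights are reloaded from the file before testing.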

Load the Model with the Best Validation Loss¶

model.load_weights('saved_models/weights.best.from_scratch.hdf5')

Test the Model¶

Try out your model on the test dataset of dog images. Ensure that your test accuracy is greater than 1%.

# get index of predicted dog breed for each image in test set

dog_breed_predictions = [np.argmax(model.predict(np.expand_dims(tensor, axis=0))) for tensor in test_tensors]

# report test accuracy

test_accuracy = 100*np.sum(np.array(dog_breed_predictions)==np.argmax(test_targets, axis=1))/len(dog_breed_predictions)

print('Test accuracy: %.4f%%' % test_accuracy)
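
The accuracy computation above compares the argmax of each predicted probability vector with the argmax of the one-hot target. A minimal plain-Python sketch of the same idea follows; the helper names and the three-class toy data are made up for illustration. It also computes the chance level for 133 equally likely breeds, which puts the 1% threshold into perspective.

```python
def argmax(values):
    """Index of the largest entry."""
    return max(range(len(values)), key=lambda i: values[i])

def accuracy_percent(predicted_probs, one_hot_targets):
    """Share of samples whose predicted class equals the target class."""
    hits = sum(argmax(p) == argmax(t)
               for p, t in zip(predicted_probs, one_hot_targets))
    return 100.0 * hits / len(one_hot_targets)

# toy example with three classes and four samples
probs = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6], [0.2, 0.5, 0.3], [0.6, 0.3, 0.1]]
targets = [[1, 0, 0], [0, 0, 1], [1, 0, 0], [1, 0, 0]]
acc = accuracy_percent(probs, targets)

# accuracy of uniform random guessing over 133 breeds, in percent
chance = 100.0 / 133
print(acc, chance)
```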

bottleneck_features = np.load('bottleneck_features/DogVGG16Data.npz')

train_VGG16 = bottleneck_features['train']

valid_VGG16 = bottleneck_features['valid']

test_VGG16 = bottleneck_features['test']

Model Architecture¶

The model uses the pre-trained VGG-16 model as a fixed feature extractor, where the last convolutional output of VGG-16 is fed as input to our model. We only add a global average pooling layer and a fully connected layer, where the latter contains one node for each dog category and is equipped with a softmax.

VGG16_model = Sequential()

VGG16_model.add(GlobalAveragePooling2D(input_shape=train_VGG16.shape[1:]))

VGG16_model.add(Dense(133, activation='softmax'))

VGG16_model.summary()
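
What the two added layers do can be sketched in plain Python. The helper below is a hypothetical illustration of global average pooling on a tiny 2 x 2 x 2 feature map given as nested lists. The parameter count at the end assumes that the VGG-16 bottleneck features have 512 channels; that number is not stated in this notebook and should be checked against `train_VGG16.shape`.

```python
def global_average_pool(feature_map):
    """Average an H x W x C nested list over H and W, returning C values."""
    height, width = len(feature_map), len(feature_map[0])
    channels = len(feature_map[0][0])
    return [
        sum(feature_map[y][x][c] for y in range(height) for x in range(width))
        / (height * width)
        for c in range(channels)
    ]

# tiny 2 x 2 feature map with 2 channels
fmap = [[[1.0, 10.0], [2.0, 20.0]],
        [[3.0, 30.0], [4.0, 40.0]]]
pooled = global_average_pool(fmap)

# weights plus biases of Dense(133) on a 512-value input (assumed channel count)
dense_params = 512 * 133 + 133
print(pooled, dense_params)
```

Since pooling has no trainable weights, the dense layer accounts for all trainable parameters of this small model, which is one reason it trains so quickly.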

Compile the Model¶

VGG16_model.compile(loss='categorical_crossentropy', optimizer='rmsprop', metrics=['accuracy'])

Train the Model¶

checkpointer = ModelCheckpoint(filepath='saved_models/weights.best.VGG16.hdf5',
                               verbose=1, save_best_only=True)

VGG16_model.fit(train_VGG16, train_targets,
                validation_data=(valid_VGG16, valid_targets),
                epochs=20, batch_size=20, callbacks=[checkpointer], verbose=1)

Load the Model with the Best Validation Loss¶

VGG16_model.load_weights('saved_models/weights.best.VGG16.hdf5')

Test the Model¶

Now, we can use the CNN to test how well it identifies breed within our test dataset of dog images. We print the test accuracy below.

# get index of predicted dog breed for each image in test set

VGG16_predictions = [np.argmax(VGG16_model.predict(np.expand_dims(feature, axis=0))) for feature in test_VGG16]

# report test accuracy

test_accuracy = 100*np.sum(np.array(VGG16_predictions)==np.argmax(test_targets, axis=1))/len(VGG16_predictions)

print('Test accuracy: %.4f%%' % test_accuracy)

Predict Dog Breed with the Model¶

from extract_bottleneck_features import *

def VGG16_predict_breed(img_path):
    # extract bottleneck features
    bottleneck_feature = extract_VGG16(path_to_tensor(img_path))
    # obtain predicted vector
    predicted_vector = VGG16_model.predict(bottleneck_feature)
    # return dog breed that is predicted by the model
    return dog_names[np.argmax(predicted_vector)]

Step 5: Create a CNN to Classify Dog Breeds (using Transfer Learning)¶

You will now use transfer learning to create a CNN that can identify dog breed from images. Your CNN must attain at least 60% accuracy on the test set.

In Step 4, we used transfer learning to create a CNN using VGG-16 bottleneck features. In this section, you must use the bottleneck features from a different pre-trained model. To make things easier for you, we have pre-computed the features for all of the networks that are currently available in Keras:

- VGG-19 bottleneck features

- ResNet-50 bottleneck features

- Inception bottleneck features

- Xception bottleneck features

The files are encoded as such:

Dog{network}Data.npz

where {network}, in the above filename, can be one of VGG19, Resnet50, InceptionV3, or Xception. Pick one of the above architectures, download the corresponding bottleneck features, and store the downloaded file in the bottleneck_features/ folder in the repository.

(IMPLEMENTATION) Obtain Bottleneck Features¶

In the code block below, extract the bottleneck features corresponding to the train, test, and validation sets by running the following:

bottleneck_features = np.load('bottleneck_features/Dog{network}Data.npz')

train_{network} = bottleneck_features['train']

valid_{network} = bottleneck_features['valid']

test_{network} = bottleneck_features['test']

### TODO: Obtain bottleneck features from another pre-trained CNN.

from keras.layers import Conv2D, MaxPooling2D, GlobalAveragePooling2D

from keras.layers import Dropout, Flatten, Dense

from keras.models import Sequential

from keras.layers import BatchNormalization

from keras.callbacks import ModelCheckpoint

# definition of model indices

model_type_VGG16 = 0

model_type_VGG19 = 1

model_type_ResNet50 = 2

model_type_Inception = 3

model_type_XCeption = 4

# model (file)names

model_names = ["VGG16", "VGG19", "Resnet50", "InceptionV3", "Xception"]

# we are using Resnet 50

selected_model_index = model_type_ResNet50

selected_model_name = model_names[selected_model_index]

print("Selected model: {}".format(selected_model_name))

# load features from disk for the selected model type

bottleneck_features_custom = np.load('bottleneck_features/Dog{}Data.npz'.format(selected_model_name))

train_custom = bottleneck_features_custom['train']

valid_custom = bottleneck_features_custom['valid']

test_custom = bottleneck_features_custom['test']
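
The file naming scheme described above is case sensitive, which is easy to get wrong (for example `Resnet50` versus `ResNet50`). A small guard like the following sketch can catch such a typo before `np.load` fails; the helper name `bottleneck_path` and the constant `NETWORKS` are made up for this illustration.

```python
# network names exactly as they appear in the file names
NETWORKS = ("VGG19", "Resnet50", "InceptionV3", "Xception")

def bottleneck_path(network, folder="bottleneck_features"):
    """Build the path of the pre-computed feature file for one network."""
    if network not in NETWORKS:
        raise ValueError("unknown network {!r}, expected one of {}".format(network, NETWORKS))
    return "{}/Dog{}Data.npz".format(folder, network)

path = bottleneck_path("Resnet50")
print(path)
```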

(IMPLEMENTATION) Model Architecture¶

Create a CNN to classify dog breed. At the end of your code cell block, summarize the layers of your model by executing the line:

<your model's name>.summary()

Question 5: Outline the steps you took to get to your final CNN architecture and your reasoning at each step. Describe why you think the architecture is suitable for the current problem.

Answer:

I visited a couple of benchmark pages such as https://github.com/jcjohnson/cnn-benchmarks, which led to further details about the structure of each of the networks provided here. I decided to use ResNet-50 because it promised the best final accuracy, while the runtime performance of the candidates did not differ much.

The model itself was then more or less straightforward: we only had to take the tremendous number of features which ResNet-50 already "highlights" for us and condense them down to our 133 dog categories with a dense layer, optimized as before via categorical cross-entropy. RMSprop worked well for this, as in the previous examples.

custom_model = Sequential()

custom_model.add(GlobalAveragePooling2D(input_shape=train_custom.shape[1:]))

custom_model.add(Dense(133, activation='softmax'))

custom_model.summary()

(IMPLEMENTATION) Compile the Model¶

custom_model.compile(loss='categorical_crossentropy', optimizer='rmsprop', metrics=['accuracy'])

(IMPLEMENTATION) Train the Model¶

Train your model in the code cell below. Use model checkpointing to save the model that attains the best validation loss.

You are welcome to augment the training data, but this is not a requirement.

checkpointer_custom = ModelCheckpoint(filepath='saved_models/weights.best.custom.hdf5',
                                      verbose=1, save_best_only=True)

custom_model.fit(train_custom, train_targets,
                 validation_data=(valid_custom, valid_targets),
                 epochs=5, batch_size=20, callbacks=[checkpointer_custom], verbose=1)

(IMPLEMENTATION) Load the Model with the Best Validation Loss¶

custom_model.load_weights('saved_models/weights.best.custom.hdf5')

(IMPLEMENTATION) Test the Model¶

Try out your model on the test dataset of dog images. Ensure that your test accuracy is greater than 60%.

# get index of predicted dog breed for each image in test set

custom_predictions = [np.argmax(custom_model.predict(np.expand_dims(feature, axis=0))) for feature in test_custom]

# report test accuracy

test_accuracy = 100*np.sum(np.array(custom_predictions)==np.argmax(test_targets, axis=1))/len(custom_predictions)

print('Test accuracy: %.4f%%' % test_accuracy)

(IMPLEMENTATION) Predict Dog Breed with the Model¶

Write a function that takes an image path as input and returns the dog breed (Affenpinscher, Afghan_hound, etc) that is predicted by your model.

Similar to the analogous function in Step 4, your function should have three steps:

- Extract the bottleneck features corresponding to the chosen CNN model.

- Supply the bottleneck features as input to the model to return the predicted vector. Note that the argmax of this prediction vector gives the index of the predicted dog breed.

- Use the dog_names array defined in Step 0 of this notebook to return the corresponding breed.

The functions to extract the bottleneck features can be found in extract_bottleneck_features.py, and they have been imported in an earlier code cell. To obtain the bottleneck features corresponding to your chosen CNN architecture, you need to use the function

extract_{network}

where {network}, in the above function name, should be one of VGG19, Resnet50, InceptionV3, or Xception.

def detect_breed(path):
    # extract bottleneck features with the extractor of the selected model
    bottleneck_features = \
        extract_VGG16(path_to_tensor(path)) if selected_model_index==model_type_VGG16 else \
        extract_VGG19(path_to_tensor(path)) if selected_model_index==model_type_VGG19 else \
        extract_Resnet50(path_to_tensor(path)) if selected_model_index==model_type_ResNet50 else \
        extract_InceptionV3(path_to_tensor(path)) if selected_model_index==model_type_Inception else \
        extract_Xception(path_to_tensor(path)) if selected_model_index==model_type_XCeption else None
    # predict breed
    prediction = custom_model.predict(bottleneck_features)
    # return predicted breed and its probability
    return dog_names[np.argmax(prediction)], np.max(prediction)
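
The chain of conditional expressions that selects the feature extractor could also be written as a dictionary lookup. The sketch below shows the pattern with stand-in functions, since the real `extract_*` functions need Keras and the pre-trained weights; `fake_extract_vgg19`, `fake_extract_resnet50`, `EXTRACTORS` and `extract_features` are hypothetical names used only here.

```python
# stand-ins for the real extract_* functions, for illustration only
def fake_extract_vgg19(tensor):
    return ("VGG19 features", tensor)

def fake_extract_resnet50(tensor):
    return ("Resnet50 features", tensor)

# one entry per supported network
EXTRACTORS = {
    "VGG19": fake_extract_vgg19,
    "Resnet50": fake_extract_resnet50,
}

def extract_features(model_name, tensor):
    """Look up the extractor for model_name and apply it to the tensor."""
    try:
        extractor = EXTRACTORS[model_name]
    except KeyError:
        raise ValueError("no extractor registered for {!r}".format(model_name))
    return extractor(tensor)

features = extract_features("Resnet50", [0.0, 1.0])
print(features)
```

A lookup like this fails with a clear message for an unsupported model instead of passing `None` on to the prediction step.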

Step 6: Write your Algorithm¶

Write an algorithm that accepts a file path to an image and first determines whether the image contains a human, dog, or neither. Then,

- if a dog is detected in the image, return the predicted breed.

- if a human is detected in the image, return the resembling dog breed.

- if neither is detected in the image, provide output that indicates an error.

You are welcome to write your own functions for detecting humans and dogs in images, but feel free to use the face_detector and dog_detector functions developed above. You are required to use your CNN from Step 5 to predict dog breed.

Some sample output for our algorithm is provided below, but feel free to design your own user experience!

(IMPLEMENTATION) Write your Algorithm¶

def detect_breed_with_image(img_path):
    # predict the breed and its probability
    breed, likeliness = detect_breed(img_path)
    # show the image
    image = cv2.cvtColor(cv2.imread(img_path), cv2.COLOR_BGR2RGB)
    plt.imshow(image)
    plt.show()
    # report the result depending on what was detected
    if dog_detector(img_path):
        print("It's a {} (I'm {:0.2f}% sure about that)".format(breed, likeliness*100))
    elif face_detector.detect(img_path):
        print("It's a human which looks like a {}".format(breed))
    else:
        print("Epic fail :(")

detect_breed_with_image(random.choice(train_files))

detect_breed_with_image(random.choice(train_files))

detect_breed_with_image(random.choice(train_files))

detect_breed_with_image(random.choice(train_files))
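
The decision logic of the algorithm can be separated from the plotting so that it is easy to test. The following sketch takes the detectors and the breed predictor as arguments and returns the message instead of printing it. All names (`describe_image`, `is_dog`, `is_human`, `predict_breed`) and the stub behavior are assumptions made for illustration; in the notebook the real detectors and `detect_breed` would be passed in.

```python
def describe_image(img_path, dog_check, human_check, breed_predictor):
    """Return the message for an image; predict a breed only when needed."""
    if dog_check(img_path):
        breed, probability = breed_predictor(img_path)
        return "It's a {} (I'm {:0.2f}% sure about that)".format(breed, probability * 100)
    if human_check(img_path):
        breed, _ = breed_predictor(img_path)
        return "It's a human which looks like a {}".format(breed)
    return "Neither a dog nor a human was detected"

# stubs that decide by file name, for illustration only
def is_dog(path):
    return path.startswith("dog")

def is_human(path):
    return path.startswith("human")

def predict_breed(path):
    return ("Beagle", 0.9)

dog_message = describe_image("dog.jpg", is_dog, is_human, predict_breed)
human_message = describe_image("human.jpg", is_dog, is_human, predict_breed)
print(dog_message)
print(human_message)
```

In this variant the comparatively expensive breed prediction is skipped when neither a dog nor a human is found.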

Step 7: Test Your Algorithm¶

In this section, you will take your new algorithm for a spin! What kind of dog does the algorithm think that you look like? If you have a dog, does it predict your dog's breed accurately? If you have a cat, does it mistakenly think that your cat is a dog?

(IMPLEMENTATION) Test Your Algorithm on Sample Images!¶

Test your algorithm on at least six images on your computer. Feel free to use any images you like. Use at least two human and two dog images.

Question 6: Is the output better than you expected :) ? Or worse :( ? Provide at least three possible points of improvement for your algorithm.

Answer:

I'm very positively impressed by how precisely the algorithm detects the breed, even in more complex pictures like the one with the waves below. It's also interesting that (likely due to the pooling) I am completely ignored in the picture below, and that the dog features the algorithm is searching for dominate and result in a certainty of 99.98%, even though a human and a dog are in the same picture.

Potential ways of further improvement:

- The training data could have been augmented, which would probably have increased the accuracy slightly.

- The algorithm fails for cross breeds as shown below. We could guess which breeds were involved. It's great that in this case the algorithm was just 56% sure and so signalled its own failure.

- There are many very similar looking breeds which differ only in size. Every human would instantly know that it can't be a pure Giant Schnauzer; the same holds for a Welsh and an Airedale Terrier. If another network, for example one trained on a general object dataset such as CIFAR, searched for objects nearby, it could be used to estimate the size of the dog.

- The algorithm sometimes fails for beards. Because of that I wrote a pure dog vs. human classifier in the optional part above, which searches for the important differences between dogs and humans instead of just looking for dogs or humans.
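
For the cross-breed case in the list above, one option would be to report the few most probable breeds instead of only the best one. A minimal sketch of such a top-k selection follows; the function name `top_k_breeds`, the breed names and the probabilities are made up for illustration and are not output of the model.

```python
def top_k_breeds(probabilities, names, k=3):
    """Return the k most probable (name, probability) pairs, best first."""
    ranked = sorted(zip(probabilities, names), reverse=True)
    return [(name, prob) for prob, name in ranked[:k]]

# made-up prediction vector over four breeds
names = ["Airedale_terrier", "Giant_schnauzer", "Welsh_terrier", "Beagle"]
probs = [0.30, 0.56, 0.10, 0.04]
top = top_k_breeds(probs, names, k=2)
print(top)
```

When the best probability is as low as in this example, the second entry gives a plausible candidate for the other breed involved.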

detect_breed_with_image("custom/PunktMichael.jpg")

detect_breed_with_image("custom/Punkt.jpg")

detect_breed_with_image("custom/PunktWaves.png")

detect_breed_with_image("custom/ShipDog.JPG")

detect_breed_with_image("custom/Maximilian.png")

detect_breed_with_image("custom/MiMallorca.png")

detect_breed_with_image("custom/Johannes_DLND.jpg")